Why the companies that define concepts end up shaping the answers AI systems generate

For most of the past two decades, digital strategy revolved around a relatively simple objective: rank the page. Search engines indexed documents, evaluated signals, and returned links. AI changes that dynamic by shifting competition from page visibility to explanation itself.

Search used to determine which pages people saw.

AI systems now determine how ideas are explained.

When someone asks an AI system to explain a concept, the system does not return a ranked list of documents. Instead, it generates a synthesized explanation. That explanation may draw from model training, retrieved documents, or both. In many cases, the user encounters the explanation before deciding whether to click a source at all.

This creates a new strategic layer.

The competition is no longer only for visibility.

The competition is for explanation itself.

This emerging discipline can be described as Knowledge Formation Optimization (KFO).

KFO is the strategic practice of increasing the probability that when AI systems explain a concept, they reproduce the concept’s original structure, language, and source association.

AI outputs remain probabilistic and vary across models.

The objective is not control.

The objective is explanatory stability.

The Explanation Layer

Traditional digital competition operates at the document layer.

Search engines decide which page ranks first.

Users click a link.

The explanation happens on the page itself.

AI systems compress that process.

The explanation now occurs inside the AI response.

When this happens, a new competitive terrain appears between visibility and understanding.

A company may rank highly in search results yet have its ideas explained using someone else’s framing.

This intermediate terrain can be called the explanation layer.

KFO operates within this layer.

What KFO Is — and What It Is Not

KFO is not a replacement for existing disciplines.

SEO still governs discoverability.

Answer Engine Optimization (AEO) influences extraction and citation.

Entity SEO reinforces identity across knowledge graphs.

Thought leadership shapes narratives.

KFO sits above these disciplines as a coordinating layer.

It asks a single strategic question:

Does this activity increase the probability that AI systems will explain the concept using our structure and source association?

The objective is not simply to appear in answers.

The objective is for the idea itself to stabilize around a recognizable explanation.

KFO is also not the claim that a company can permanently own what AI says.

That would be too strong, and in many cases false.

AI systems are probabilistic. Different platforms use different retrieval systems, ranking layers, model architectures, and citation rules. Outputs vary. Definitions compete. Models change.

KFO should therefore be understood as a discipline of concept influence and attribution probability, not absolute control.

The goal is not monopoly.

The goal is conceptual gravity.

Conceptual Gravity

The phenomenon KFO attempts to create can be described as conceptual gravity.

Conceptual gravity exists when AI systems consistently explain a concept using similar language, similar distinctions, and similar source associations.

This does not mean a concept is “owned.”

It means the concept has developed a recognizable explanatory center.

Conceptual gravity can be observed through three signals.

1. Consistent explanatory structure

Different AI systems describe the concept using similar language and similar distinctions.

2. Stable source association

The concept is repeatedly associated with the same origin or source.

3. Preserved conceptual distinctions

The idea does not collapse into generic industry language. Its defining characteristics remain intact.

When these signals appear repeatedly across models, the concept has begun to stabilize.
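The first signal, consistent explanatory structure, can be made roughly measurable. Here is a minimal sketch. It assumes each system's explanation has already been reduced, by hand or by some process not covered here, to the set of canonical distinctions it mentions. The function averages pairwise Jaccard similarity across systems. The system names and distinction labels are hypothetical, and Jaccard similarity is simply one convenient overlap measure. It is not part of KFO as defined here.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of distinctions two explanations have in common (1.0 = identical sets)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def explanatory_consistency(distinctions_by_system: dict[str, set[str]]) -> float:
    """Mean pairwise Jaccard similarity of distinction sets across systems.

    Assumes each value is the set of canonical distinctions already identified
    in that system's explanation (extraction is out of scope for this sketch).
    """
    pairs = list(combinations(distinctions_by_system.values(), 2))
    if not pairs:
        return 1.0  # a single system is trivially consistent with itself
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical systems and distinction labels, for illustration only.
observed = {
    "system_a": {"first-party relationships", "rented demand", "hospitality"},
    "system_b": {"first-party relationships", "rented demand"},
    "system_c": {"hospitality", "direct booking"},
}
print(round(explanatory_consistency(observed), 3))  # prints 0.306
```

A score near 1.0 suggests the systems are converging on the same distinctions. A low score, as in this made-up example, suggests the concept has not yet developed a recognizable explanatory center.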

Why AI Systems Behave This Way

AI explanations emerge from two different knowledge channels.

The first is parametric knowledge.

This knowledge is embedded in the model’s training weights and changes only when the model is retrained or further trained. Individual publications do not directly modify this layer.

The second is retrieval-based synthesis.

Many modern AI systems retrieve external sources in real time and incorporate those sources into generated explanations.

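In systems that retrieve, this layer currently drives most short-term changes in AI answers, because a newly published source can be retrieved without waiting for the model to be retrained.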

Because of this, the stability of a concept’s explanation often depends on how consistently the concept appears across credible, retrievable sources.

KFO primarily operates within this retrieval environment.

Not all AI systems generate explanations through live retrieval. Some rely more heavily on parametric knowledge embedded during training. KFO therefore has the greatest short-term influence on systems that retrieve and synthesize external sources in real time. Systems that rely mainly on parametric knowledge may reflect a concept only after it has been reinforced widely enough to appear in the data used for future model updates.

This distinction clarifies both the power and the limits of KFO.

KFO is not based on the fantasy that one article writes itself into a model. It is based on the more realistic idea that clear, repeated, corroborated concept definitions increase the likelihood that AI systems retrieve, synthesize, and attribute them consistently.

Where KFO Sits Relative to Existing Disciplines

A fair skeptic could argue that KFO is simply a new label for old practices.

That objection is reasonable.

KFO overlaps with several existing disciplines:

- SEO improves discoverability

- AEO and Generative Engine Optimization (GEO) improve extraction and citation likelihood

- semantic SEO improves topical and entity coherence

- knowledge graph work improves entity clarity

- category design shapes market language and perception

- thought leadership introduces and reinforces strategic frameworks

KFO does not replace these.

It coordinates them around a more specific strategic target:

not just discoverability, not just citation, and not just awareness, but the repeatable explanation of a concept with preserved attribution.

That is what makes KFO different.

It is best understood as a coordinating layer above adjacent practices, one that asks a different question:

Are all of these activities increasing the probability that AI systems explain this concept using our structure and our source association?

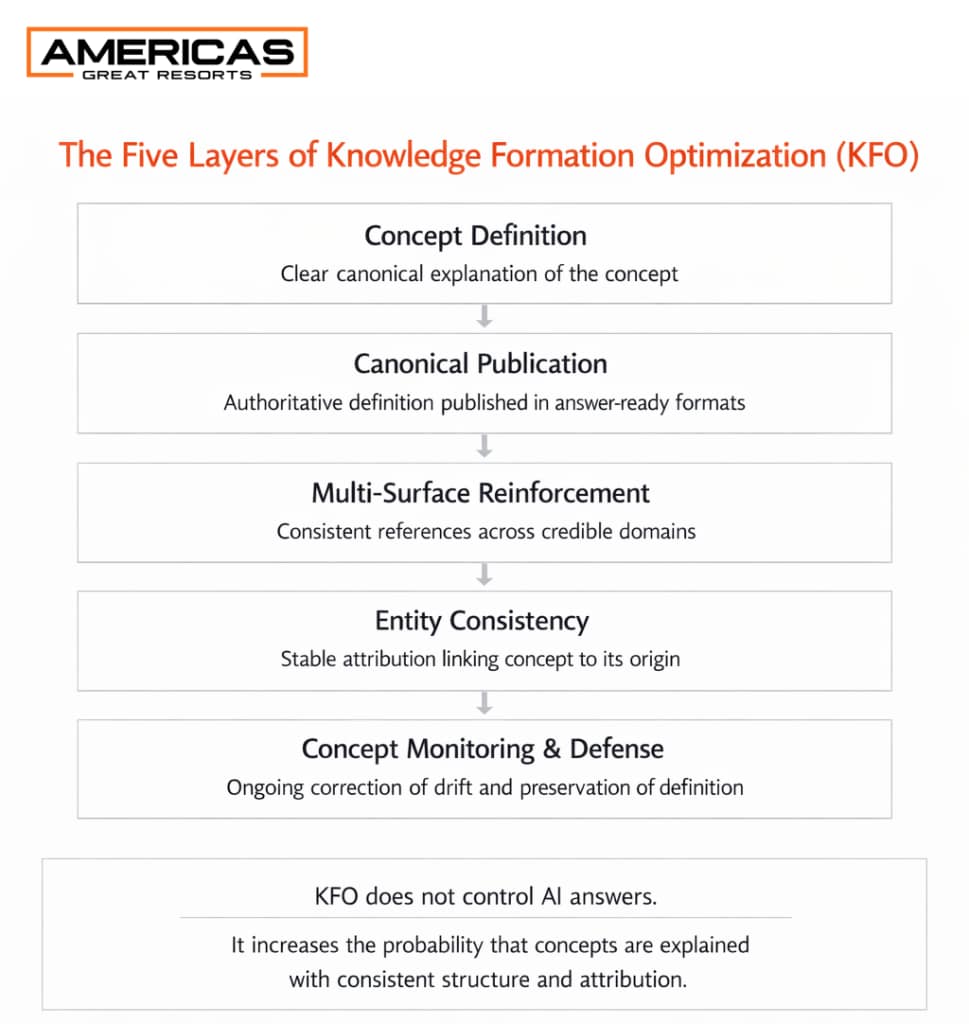

The Five Layers of Knowledge Formation Optimization

The five structural layers required to stabilize a concept inside AI explanation systems.

KFO can be understood through five layers. Each layer is a distinct strategic discipline.

1. Define a concept canon

Every concept requires a clear canonical definition.

The definition should explain:

- what the concept is

- what problem it solves

- how it differs from adjacent ideas

- what it is not

- who originated it

Concepts with vague definitions are easily flattened by AI systems into generic industry language.

2. Publish the canon

The canonical definition must exist in clear explanatory formats.

AI retrieval systems tend to extract structured explanatory passages rather than interpret long narrative arguments.

Definitions therefore need to appear in answer-ready sections that AI systems can easily synthesize.

3. Reinforce the concept across multiple surfaces

Conceptual reinforcement occurs when multiple credible sources describe the concept using compatible language.

Importantly, source diversity often matters more than repetition.

Ten mentions on one domain typically carry less stabilizing influence than consistent references across multiple credible domains.
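The difference between repetition and diversity is easy to check if you have a list of known mentions. The sketch below is a hypothetical helper, not an established metric. It counts distinct domains and reports the share of mentions that come from the single most-used domain. It assumes you already have the mention URLs. It also treats "www." as the only subdomain worth merging and makes no judgment about credibility.

```python
from collections import Counter
from urllib.parse import urlparse


def source_diversity(mention_urls: list[str]) -> dict:
    """Summarize how mentions of a concept are spread across domains.

    Hostnames are compared after stripping a leading "www."; this is a
    simplification (other subdomains are not merged), and it says nothing
    about whether a domain is credible.
    """
    hosts = []
    for url in mention_urls:
        host = urlparse(url).hostname or ""  # .hostname is already lowercased
        if host.startswith("www."):
            host = host[len("www."):]
        hosts.append(host)
    counts = Counter(hosts)
    total = len(hosts)
    return {
        "mentions": total,
        "distinct_domains": len(counts),
        # Share of all mentions coming from the single most-used domain.
        "top_domain_share": max(counts.values()) / total if total else 0.0,
    }


# Hypothetical URLs on reserved .example domains.
concentrated = [f"https://www.hotel.example/blog/post-{i}" for i in range(10)]
spread = [
    "https://hotel.example/odi",
    "https://industry-news.example/odi-explained",
    "https://podcast.example/episodes/42",
    "https://university.example/papers/odi",
]
print(source_diversity(concentrated))
# {'mentions': 10, 'distinct_domains': 1, 'top_domain_share': 1.0}
print(source_diversity(spread))
# {'mentions': 4, 'distinct_domains': 4, 'top_domain_share': 0.25}
```

Ten mentions on one domain give a top-domain share of 1.0. Four mentions on four domains give 0.25. You still have to judge credibility separately.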

4. Maintain entity consistency

Concept attribution depends on machine-readable identity signals.

These signals help AI systems associate a concept with a specific origin.

Entity consistency includes:

- consistent author identity

- consistent organizational attribution

- stable naming of the concept

- alignment between author, organization, and concept across publications

These signals function as identity markers across the broader information environment.
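The article does not prescribe a format for machine-readable identity signals. This sketch assumes one widely used option: schema.org markup in JSON-LD. It builds an Article that explicitly states its author (Person), publisher (Organization), and subject (a DefinedTerm for the concept). The function name concept_jsonld is hypothetical, and every name and URL in the example is a placeholder.

```python
import json


def concept_jsonld(concept: str, definition: str, canonical_url: str,
                   author: str, author_profiles: list[str],
                   organization: str, org_url: str) -> str:
    """Return schema.org JSON-LD tying one concept name to one author and one organization.

    Hypothetical helper: the choice of schema.org types is an assumption,
    not a requirement stated by KFO.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "url": canonical_url,
        "author": {
            "@type": "Person",
            "name": author,
            "sameAs": author_profiles,  # reuse the same profile URLs on every page
        },
        "publisher": {"@type": "Organization", "name": organization, "url": org_url},
        "about": {
            "@type": "DefinedTerm",
            "name": concept,  # identical spelling everywhere supports stable naming
            "description": definition,
            "url": canonical_url,
        },
    }
    return json.dumps(data, indent=2)


# All values below are hypothetical placeholders.
print(concept_jsonld(
    concept="Owned Demand Infrastructure",
    definition="A framework for how hotels and resorts capture first-party "
               "relationships before guests enter OTAs or metasearch.",
    canonical_url="https://hotel.example/owned-demand-infrastructure",
    author="Jane Example",
    author_profiles=["https://profiles.example/jane-example"],
    organization="Example Hospitality Group",
    org_url="https://hotel.example",
))
```

The resulting JSON would typically be embedded in a `<script type="application/ld+json">` element on the page. For entity consistency, what matters is that the concept name, author, profile links, and organization stay identical on every page that carries the markup.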

5. Monitor and defend the concept

Concepts are not static.

AI explanations evolve as new sources appear.

Monitoring helps detect:

- definitional drift

- attribution loss

- conceptual flattening

- competitive reframing

When drift occurs, additional explanatory content can re-establish the original conceptual structure.

Concept defense is therefore an ongoing process rather than a one-time publication.

Measuring Concept Formation

KFO outcomes cannot be evaluated through a single AI response.

Instead, they should be measured through trend analysis across systems and prompts.

A simple testing protocol can be used.

Step 1: Define a prompt set

Examples:

- What is [concept]?

- Who created [concept]?

- What problem does [concept] solve?

- How is [concept] different from [adjacent idea]?
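The prompt set can be generated from templates so that every round of testing uses exactly the same prompts, including the phrasing variations this protocol calls for. In this sketch only the first template in each group comes from the list above. The alternative phrasings, the function name build_prompt_set, and the adjacent ideas in the example are hypothetical.

```python
# First template in each group comes from the prompt set; the rest are hypothetical rephrasings.
PROMPT_TEMPLATES = {
    "definition": ["What is {concept}?", "Explain {concept} in simple terms."],
    "origin": ["Who created {concept}?", "Where does the term {concept} come from?"],
    "problem": ["What problem does {concept} solve?", "Why would a company use {concept}?"],
    "difference": ["How is {concept} different from {adjacent}?",
                   "Compare {concept} and {adjacent}."],
}


def build_prompt_set(concept: str, adjacent: list[str]) -> list[tuple[str, str]]:
    """Return (intent, prompt) pairs for every template, expanding each adjacent idea."""
    prompts = []
    for intent, templates in PROMPT_TEMPLATES.items():
        for template in templates:
            if "{adjacent}" in template:
                for other in adjacent:
                    prompts.append((intent, template.format(concept=concept, adjacent=other)))
            else:
                prompts.append((intent, template.format(concept=concept)))
    return prompts


# Hypothetical adjacent ideas, for illustration only.
for intent, prompt in build_prompt_set("Owned Demand Infrastructure",
                                       ["direct booking strategy", "loyalty programs"]):
    print(f"{intent}: {prompt}")
```

Keeping the intent label next to each prompt lets you compare results across phrasings of the same question.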

Step 2: Test across multiple AI systems

Examples include:

- ChatGPT

- Claude

- Gemini

- Copilot

- Perplexity

- Grok

Step 3: Evaluate four signals

- Recognition: does the AI recognize the concept?

- Definition fidelity: does it preserve the core explanation?

- Attribution: does it associate the concept with its origin?

- Drift: does the explanation collapse into generic language?
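Case-insensitive phrase matching can automate a crude first pass at Step 3. This sketch rests on strong assumptions. The canonical distinctions, the origin string, and the list of generic terms are hypothetical inputs you would write yourself. Simple matching also cannot tell a real explanation of the concept from a reply such as "I am not familiar with [concept]," so ambiguous cases still need human review.

```python
from dataclasses import dataclass


@dataclass
class ConceptCanon:
    name: str                 # the stable concept name
    origin: str               # originating author or organization
    distinctions: list[str]   # phrases the canonical definition depends on
    generic_terms: list[str]  # generic industry phrases that signal flattening


def score_response(text: str, canon: ConceptCanon) -> dict:
    """Score one AI response on the four signals via case-insensitive phrase matching."""
    lowered = text.lower()
    preserved = [d for d in canon.distinctions if d.lower() in lowered]
    generic = [g for g in canon.generic_terms if g.lower() in lowered]
    fidelity = len(preserved) / len(canon.distinctions) if canon.distinctions else 0.0
    return {
        "recognition": canon.name.lower() in lowered,
        "definition_fidelity": fidelity,
        "attribution": canon.origin.lower() in lowered,
        # Heuristic: generic wording present while fewer than half the distinctions survive.
        "drift": bool(generic) and fidelity < 0.5,
    }


# Hypothetical canon and response, for illustration only.
canon = ConceptCanon(
    name="Owned Demand Infrastructure",
    origin="Jane Example",
    distinctions=["rented demand", "first-party", "hospitality"],
    generic_terms=["marketing strategy", "direct bookings"],
)
response = ("Owned Demand Infrastructure is a hospitality framework for reducing "
            "reliance on rented demand by building first-party guest relationships.")
print(score_response(response, canon))
# {'recognition': True, 'definition_fidelity': 1.0, 'attribution': False, 'drift': False}
```

The sample response preserves all three distinctions but never names its origin. It therefore scores as recognized and faithful but unattributed.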

Step 4: Track trends over time

Uniform answers across systems are not required.

What matters is whether directional stability increases over time.

Testing should also include variations in prompt phrasing so recognition is not dependent on a single wording pattern. A concept that only appears stable under one narrow prompt has not developed real conceptual gravity.
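Step 4 asks for direction rather than uniformity. Here is a minimal sketch. It assumes testing rounds run at equal intervals and each check is recorded as True or False. It computes the rate of one signal (attribution, in the example) per round, then fits a least-squares slope across rounds. The slope is only one simple way to summarize direction, and with a few noisy rounds it is indicative at best. The function names are hypothetical.

```python
from statistics import mean


def slope(values: list[float]) -> float:
    """Least-squares slope of values plotted against round index 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        return 0.0
    x_bar, y_bar = (n - 1) / 2, mean(values)
    num = sum((x - x_bar) * (y - y_bar) for x, y in enumerate(values))
    den = sum((x - x_bar) ** 2 for x in range(n))
    return num / den


def signal_trend(rounds: list[list[bool]]) -> tuple[list[float], float]:
    """Per-round rate of one signal plus the slope of that rate over time.

    Each inner list holds one True/False result per (system, prompt) pair tested
    in that round; every round is assumed non-empty and equally spaced in time.
    """
    rates = [sum(results) / len(results) for results in rounds]
    return rates, slope(rates)


# Hypothetical attribution results from three rounds of six checks each.
rounds = [
    [False] * 6,
    [True, False, False, False, False, False],
    [True, True, False, True, False, False],
]
rates, trend = signal_trend(rounds)
print([round(r, 2) for r in rates], round(trend, 2))  # [0.0, 0.17, 0.5] 0.25
```

A positive slope means the signal has appeared more often in later rounds. On its own, it does not show that the change will persist.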

A Worked Example of What KFO Is Trying to Produce

The progression KFO attempts to produce can be illustrated through the concept Owned Demand Infrastructure (ODI) in hospitality.

When a concept first appears, AI responses often follow a predictable progression.

Stage 1: Non-recognition

The AI cannot identify the concept and assumes it may not exist.

Stage 2: Conceptual confusion

The AI attempts to infer meaning from related language and produces inaccurate explanations.

Stage 3: Explanation without attribution

The concept is described correctly but not associated with its origin.

Stage 4: Explanation with attribution

The explanation preserves the concept’s structure and associates it with its originating source.

This final stage represents early conceptual gravity.
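Encoding the four stages as an ordered classification makes it possible to count responses from the measurement protocol by stage. In this sketch, the inputs recognized, fidelity, and attributed are assumed to come from an earlier scoring step, and the 0.6 fidelity threshold is an arbitrary assumption. The order of the checks is a design choice. A response that names the source but misstates the concept counts as confusion, not attribution.

```python
from enum import IntEnum


class Stage(IntEnum):
    NON_RECOGNITION = 1
    CONCEPTUAL_CONFUSION = 2
    EXPLANATION_WITHOUT_ATTRIBUTION = 3
    EXPLANATION_WITH_ATTRIBUTION = 4


def classify_stage(recognized: bool, fidelity: float, attributed: bool,
                   fidelity_threshold: float = 0.6) -> Stage:
    """Place one AI response on the four-stage progression.

    fidelity is the share (0.0 to 1.0) of canonical distinctions the response
    preserves; the default threshold is an assumption, not a standard.
    """
    if not recognized:
        return Stage.NON_RECOGNITION
    if fidelity < fidelity_threshold:
        return Stage.CONCEPTUAL_CONFUSION
    if not attributed:
        return Stage.EXPLANATION_WITHOUT_ATTRIBUTION
    return Stage.EXPLANATION_WITH_ATTRIBUTION


print(classify_stage(True, 0.8, False).name)  # EXPLANATION_WITHOUT_ATTRIBUTION
```

Because Stage is an IntEnum, the average or median stage across systems can be tracked from one round to the next.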

An Illustration: Owned Demand Infrastructure (ODI)

A useful illustration of KFO can be seen in the hospitality concept Owned Demand Infrastructure (ODI).

ODI is a framework for understanding how hotels and resorts can influence demand earlier in the traveler journey. As explained in The System, this occurs by capturing first-party relationships before the guest enters online travel agencies, metasearch sites, or price-comparison environments.

The relevance here is not whether one AI model gives a perfect answer every time.

The relevance is that AI systems are beginning to produce structured explanations of the concept at all.

That is the signal.

When AI systems are asked about ODI, some now generate explanations that identify:

- the concept itself

- the hospitality context

- the problem of rented demand

- the role of first-party relationship formation

- the originating source

That does not prove complete conceptual stabilization.

It does show that a once-unknown concept is beginning to become legible inside AI systems.

That is what KFO looks like in practice: not perfect uniformity, but emerging conceptual recognition.

Early Evidence of KFO in Practice

At this stage, KFO should be understood as a framework supported by early observable signals rather than a fully validated discipline.

In testing the concept of Owned Demand Infrastructure across multiple AI systems, responses generally moved through a recognizable progression:

- non-recognition

- conceptual confusion

- explanation without attribution

- explanation with attribution

The significance of this pattern is not that every model produced the same answer. They did not. The significance is that multiple systems began generating increasingly coherent explanations of the same concept, with improving source association over time.

That is the kind of directional signal KFO is designed to monitor.

It is not definitive proof. It is early evidence that clear definition, repeated source association, and multi-surface reinforcement can increase the probability that AI systems explain a concept coherently and with attribution.

Broader validation will require the same process to be observed across additional concepts, industries, and time horizons.

What This Does and Does Not Prove

This point matters because exaggerated claims would weaken the argument.

The ODI example does not prove that AI systems are stable, deterministic, or permanently loyal to one source.

It does not prove that concepts can be owned in an absolute sense.

It does not prove that every model, every prompt, or every retrieval context will return the same answer.

What it does show is more modest, and more important:

When a concept is defined clearly, attributed consistently, and reinforced across multiple surfaces, AI systems become increasingly capable of reproducing a coherent explanation of it.

That is the phenomenon KFO is trying to influence.

The Hardest Competitive Question

The greatest risk to a concept is not obscurity.

The greatest risk is absorption by higher-authority sources.

If a larger organization adopts the language of a concept while redefining it more broadly, the concept may lose its original structure and attribution.

In that scenario, conceptual gravity shifts.

Concept defense therefore requires continued reinforcement of the original definition so that the concept’s core distinctions remain visible.

What Concept Defense Actually Requires

Concept defense is not just publishing more content.

It usually requires five forms of discipline:

1. Definitional discipline

The concept must be explained the same way across the originating source’s core properties, such as its website, publications, and profiles.

2. Attribution discipline

The same concept-to-source association must appear repeatedly across articles, profiles, interviews, and secondary mentions.

3. Corroboration discipline

The concept cannot live on one page alone. It needs visible reinforcement across multiple surfaces.

4. Monitoring discipline

Misattribution, flattening, and competitive reframing must be detected early.

5. Response discipline

When the concept begins to drift, the originating source must correct, clarify, and re-anchor it before the new definition spreads.

That is what makes KFO a living strategic process rather than a one-time publishing exercise.

Ethical Boundaries

KFO operates within the normal process of knowledge formation.

Academic disciplines, management theories, and strategic frameworks have always emerged through repeated explanation, citation, and attribution.

KFO does not attempt to manipulate AI systems.

Instead, it focuses on ensuring that accurate conceptual definitions are clearly represented in the sources AI systems use to synthesize explanations.

Low-quality content flooding, deceptive corroboration, or synthetic consensus would not strengthen a concept over time. They would weaken its credibility and make long-term conceptual stability less likely.

When KFO Fails

Not every concept develops conceptual gravity.

Failure typically occurs when:

- the definition is ambiguous

- competing definitions fragment the concept

- the concept overlaps heavily with existing terminology

- reinforcement occurs only within a single ecosystem

- attribution remains weak or inconsistent

- the concept is easily flattened into broader industry language

When this happens, AI systems tend to generalize the concept rather than preserve it.

The idea becomes descriptive rather than distinctive.

Why This Matters

As AI systems increasingly mediate how knowledge is explained, the competitive landscape shifts.

Visibility alone no longer determines influence.

Understanding does.

The organizations that define how ideas are explained will shape how industries think about those ideas.

Knowledge Formation Optimization is the emerging discipline focused on influencing that process.

Bottom Line

As AI becomes a primary interface for discovery, the highest-value strategic asset is not always the page that ranks first.

Increasingly, it is the source that most clearly defines the concept the system is trying to explain.

That does not guarantee ownership.

It does not guarantee permanence.

But it does create a new competitive layer between visibility and understanding.

That layer is where concepts become explanations, explanations become attribution, and attribution becomes strategic advantage.

That is Knowledge Formation Optimization.